The dead can’t tell their stories. Even when their accounts are documented, they are often given less credence than the accounts of survivors who still exist to share theirs. Simply put, survivorship bias is a logical fallacy in which we concentrate on the accounts of those who have made it […]

No matter the topic, there will always be a glut of information at our fingertips. Right now, the topic is the novel coronavirus: its spread, its mortality rate, and the measures put in place to limit transmission. As usual, there is good information and plenty of bad information. People […]

Woodturning is the craft of spinning wood on a lathe and using tools and abrasives to shape it into a finished product. Penturning is a subset of this focused specifically on creating handmade pens with a lathe. Before I purchased my first material, I did a lot of research and […]

Two of my favorite linguistic devices are the garden path sentence and the paraprosdokian. You’ve likely encountered these many times in the past, even if you didn’t know how they were categorized. They are both sentences that cause the reader to reconsider information as the sentence is being read. For […]

You’ve written a thoughtful post or comment speaking against a particular policy or practice. You’ve done your best to contribute meaningfully to the discussion by avoiding logical fallacies and substantiating claims with evidence. Then someone responds… “I didn’t see you crowing on about this issue/policy when [other politician] did it!” […]

Merriam-Webster defines bias as “a personal and sometimes unreasoned judgment.” We should look for media outlets that don’t do this then, right? I would argue that the presence of bias should not immediately render a media outlet unacceptable. We, as readers, should be able to recognize bias when we […]

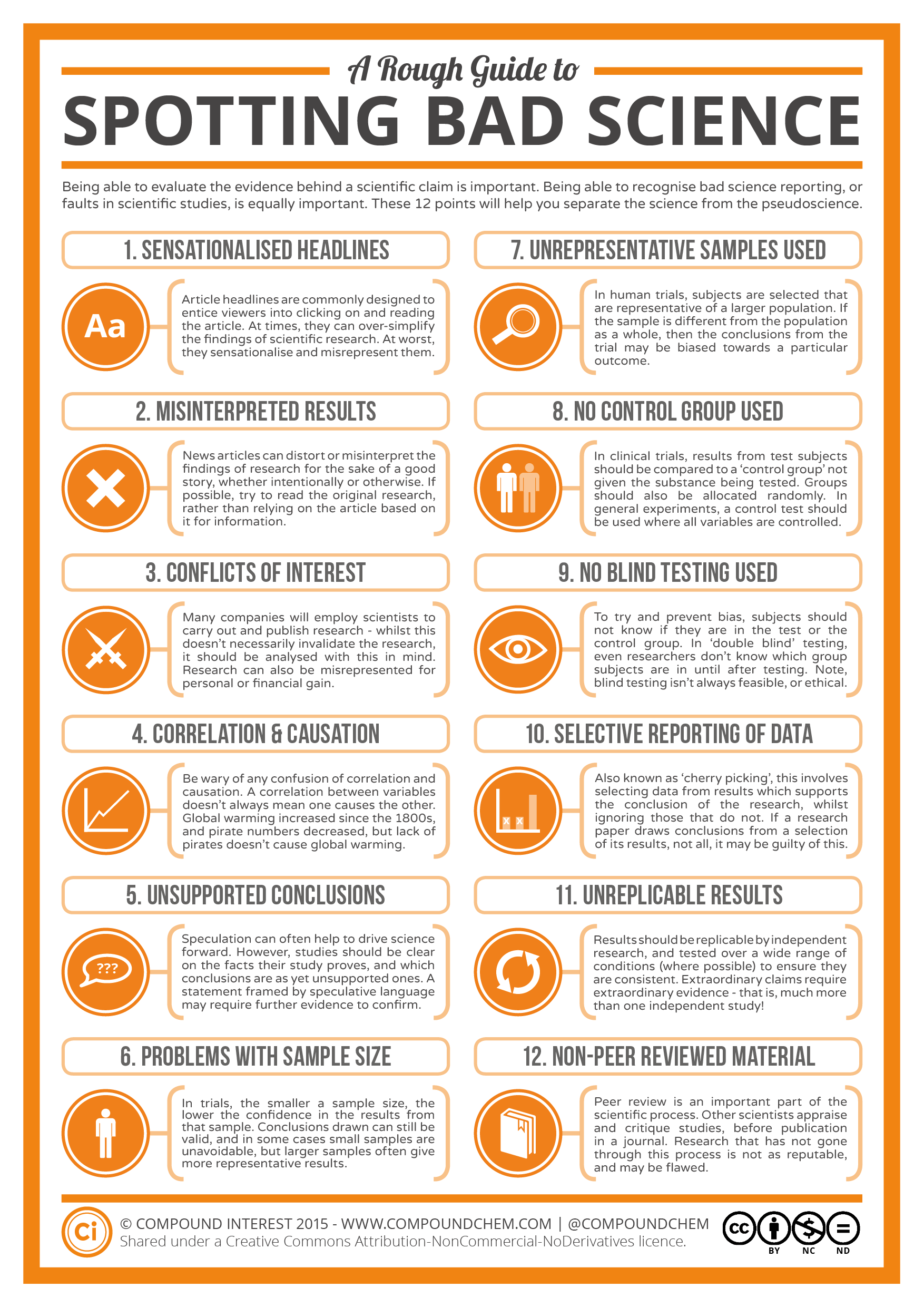

YOU WON’T BELIEVE WHICH SUPERFOOD WILL [[insert claim here]]! When it comes to medical science and health, be extra careful about the sources you share from, who is attaching their name to the articles, and what research they are linking to. There is a dangerous habit of media outlets of […]

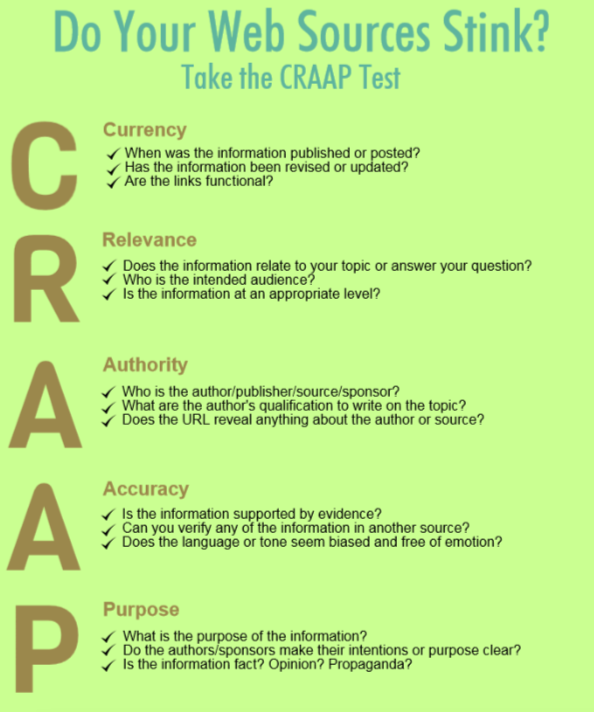

Is your source CRAAP? The CRAAP test was designed by librarians at CSU Chico to examine a source of information. It looks at the areas of Currency, Relevance, Authority, Accuracy, and Purpose as a means of separating the good from the bad. Sharing a picture with words on it on […]

I live in a remote village in Alaska that is only accessible by air. I suppose you could get here by boat, but that would be both costly and dangerous. I live in a hub village that gets passenger jet service twice daily from Alaska Airlines. Living in a hub […]